
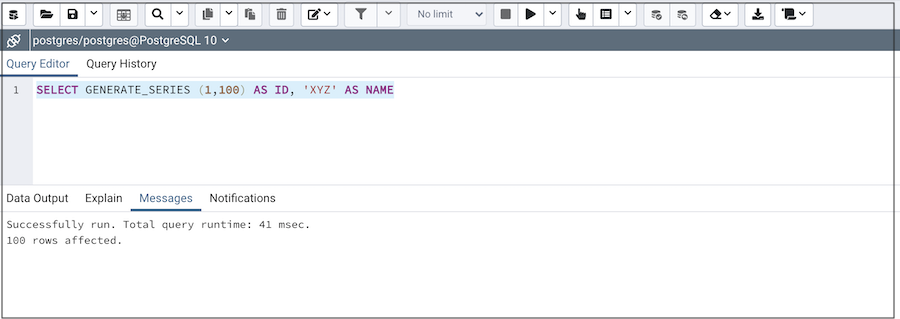

Pgadmin 4 run query

5/30/2023

We have a pgsql function that inserts rows into the db based on a complex query. The database is Postgres 11 on Azure Single Server (16 cores, 32 GB memory). The last problematic run would insert 6000 rows. Yes, the function's query is likely not very efficient, inflating memory usage with ineffective joins, sort/distinct, and so on.

Now, when the function is executed by our Spring Boot application over JDBC, it runs extremely long (20 minutes, sometimes infinitely). The application uses the Java Tomcat connection pool with ~25 idle connections being reused.

But when we run the same pgsql function through pgAdmin, it succeeds every time, in about 1 minute! It also works when executing it against a local Postgres instance.

I would expect to have the same issues regardless of whether we execute the query through pgAdmin or the application. What could be the reason for such extremely inconsistent execution times? If it were a database configuration issue (e.g. because of low work_mem), shouldn't every connection have the same issues?

The executed statement is SELECT * FROM create_change_reviews(10).

Here is the function, to get an idea of what it does. It shouldn't matter, as optimizing the query is not what I am asking for.

    CREATE OR REPLACE FUNCTION create_change_reviews(rev integer)
    ...
    JOIN so_module m ON dv.id = m.document_version_id and m.import_id = rev
    ... m.id = mc.module_id AND mc.importance IN ('must know')
    JOIN so_user_role ur ON u.id = ur.user_id
    JOIN so_role r ON ur.role_id = r.id AND rd.importance_role = r.name
    ... ON per.role_name = rd.importance_role = r.name
    JOIN so_module_change_effect mce ON mce.effect_id = er.effect_id AND rd.mc_id = mce.change_id
    LEFT JOIN so_module_change_effect ce on rd.mc_id = ce.change_id
    ...
    SELECT user_id, oid FROM changes_with_effect
    ...
    SELECT user_id, oid FROM changes_no_effect
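For anyone who wants to reproduce the timing outside the application, here is a minimal sketch of how the statement could be issued over plain JDBC and timed. It is not the application's actual code: the class name `ChangeReviewCall`, the `PG_JDBC_URL` environment variable, and the 30-minute timeout are all assumptions made for illustration, and it presumes the PostgreSQL JDBC driver is on the classpath.

```java
import java.sql.Connection;
import java.sql.DriverManager;
import java.sql.PreparedStatement;
import java.sql.ResultSet;
import java.sql.SQLException;

// Hypothetical helper, not taken from the application in the question.
class ChangeReviewCall {
    // The statement from the question, with the revision as a bind parameter.
    static final String SQL = "SELECT * FROM create_change_reviews(?)";

    // Runs the function on the given connection and returns the elapsed milliseconds.
    static long timeCall(Connection conn, int rev, int timeoutSeconds) throws SQLException {
        long start = System.nanoTime();
        try (PreparedStatement ps = conn.prepareStatement(SQL)) {
            // Ask the driver to cancel the statement instead of letting it run forever.
            ps.setQueryTimeout(timeoutSeconds);
            ps.setInt(1, rev);
            try (ResultSet rs = ps.executeQuery()) {
                while (rs.next()) {
                    // Drain the result set so the whole call is measured.
                }
            }
        }
        return (System.nanoTime() - start) / 1_000_000;
    }

    public static void main(String[] args) throws SQLException {
        // PG_JDBC_URL is an assumed variable, e.g. jdbc:postgresql://host/db?user=...&password=...
        String url = System.getenv("PG_JDBC_URL");
        if (url == null) {
            System.out.println("PG_JDBC_URL is not set; nothing to run.");
            return;
        }
        try (Connection conn = DriverManager.getConnection(url)) {
            System.out.println("elapsed ms: " + timeCall(conn, 10, 1800));
        }
    }
}
```

Note that this sketch passes the revision as a bind parameter, whereas the statement typed into pgAdmin uses the literal `10`. That is a choice of the sketch; how the application actually sends the value is not stated in the question.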
